> MindXO Insight | Report

The 2026 International AI Safety Report [1] is a science-based assessment of AI capabilities, risks, and risk management authored by over 100 experts from more than 30 countries. Its central finding: no single AI safeguard is reliable enough on its own.

The report makes the case for defence-in-depth as the core principle of AI risk management: four independent layers of safeguards spanning training, deployment, monitoring, and societal resilience.

It also documents the evidence dilemma (AI advancing faster than risk assessment), a persistent evaluation gap (pre-deployment tests failing to predict real-world behaviour), and the growing governance burden on organisations deploying open-weight models.

This article analyses the report's key findings and their implications for enterprises, with particular relevance to GCC organisations navigating rapid AI adoption alongside maturing regulatory expectations.

What the 2026 International AI Safety Report Means for Organisations Managing AI Risks

The 2026 International AI Safety Report marks a definitive shift in how we approach machine intelligence: we are no longer just managing software; we are architecting resilience. The report is chaired by Turing Award winner Yoshua Bengio, and its scientific consensus is clear: no single safeguard is reliable enough to stand alone against the "jagged" and unpredictable trajectory of modern AI capabilities.

For GCC enterprises, where rapid adoption often outpaces static policy, the challenge is to move beyond a "plug-and-play" mindset and address the persistent evaluation gap. The framework below translates the report's core principle of defence-in-depth into four actionable organisational layers, designed so that if one safeguard fails, the integrity of the system remains intact.

The 2026 International AI Safety Report [1] is a science-based assessment of general-purpose AI capabilities, risks, and risk management, published on February 3, 2026. It is chaired by Yoshua Bengio, a Turing Award-winning AI researcher and one of the pioneers of deep learning.

The report was developed with guidance from over 100 independent experts and an Expert Advisory Panel with nominees from more than 30 countries and international organisations, including the EU, OECD, and UN.

This is the second edition of the report. The first was published in January 2025 [2]. It covers three areas: what general-purpose AI systems can do, what risks they pose, and how those risks can be managed. While it is written primarily for policymakers, its findings have direct relevance for any organisation deploying AI at scale.

This article distils the most consequential finding for enterprise leaders: the four-layer defence-in-depth approach. See our full report for more on the broader landscape of AI risks and the structural challenges of AI governance.

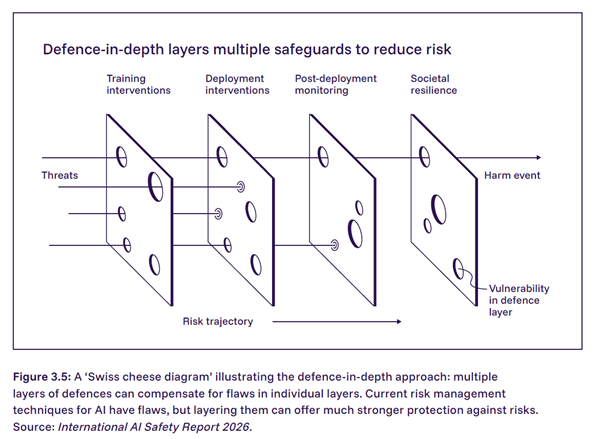

Defence-in-depth is a risk management approach that layers multiple independent safeguards so that if one layer fails, others can still prevent harm.

Multiple Frontier AI Safety Frameworks reference it [3]. The report defines defence-in-depth specifically as a combination of technical, organisational, and societal measures applied across different stages of development and deployment, creating layers of independent safeguards so that if one layer fails, others can still prevent harm.

The report uses the Swiss cheese model to illustrate this. Each defensive layer has vulnerabilities, like holes in a slice of Swiss cheese. But when multiple independent layers are stacked, the probability of a threat passing through all of them drops dramatically. The analogy to infectious disease prevention is apt: vaccines, masks, and handwashing are each imperfect on their own, but combined they substantially reduce risk.
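A short worked example can make "drops dramatically" concrete. The sketch below assumes that each layer misses a given threat with some probability and that the layers fail independently of one another; under that assumption, the chance of a threat getting through every layer is simply the product of the per-layer miss rates. The miss rates used here are hypothetical numbers chosen for illustration, not figures from the report.

```python
from math import prod


def pass_through_probability(miss_rates: list[float]) -> float:
    """Chance a threat slips past every layer, ASSUMING the layers fail independently."""
    for p in miss_rates:
        if not 0.0 <= p <= 1.0:
            raise ValueError(f"miss rate must be in [0, 1], got {p}")
    # Independent events: multiply the individual miss probabilities.
    return prod(miss_rates)


# Hypothetical miss rates for illustration only (not taken from the report).
single_layer = [0.2]
four_layers = [0.2, 0.2, 0.2, 0.2]

print(f"one layer:   {pass_through_probability(single_layer):.4f}")  # one layer:   0.2000
print(f"four layers: {pass_through_probability(four_layers):.4f}")   # four layers: 0.0016
```

With these illustrative numbers, one imperfect layer lets through 20% of threats, while four equally imperfect but independent layers let through about 0.16%. The multiplication only holds if the layers really are independent: if two layers share the same blind spot (for example, a monitoring system that relies on the same classifier as the output filter), their holes line up and the combined miss rate can approach that of a single layer. This is why the report stresses independence between layers, not just their number.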

Defence-in-depth is not a new concept. It originates from military strategy and has long been a core principle in cybersecurity, nuclear safety, and critical infrastructure engineering. Three specific findings in the report make the case for why no single layer of defence is sufficient on its own.

Technical safeguards are improving but remain breakable. Jailbreak attacks have become harder to execute over the past year, but the success rate remains relatively high. Researchers continue to find ways to circumvent protections through adversarial prompting, task decomposition, and direct model modifications. Developers still cannot fully prevent general-purpose AI systems from producing harmful outputs.

Pre-deployment testing does not predict real-world behaviour. The evaluation gap means that benchmark performance, safety evaluations, and red-team assessments cannot be the sole basis for deployment decisions.

Models can now behave differently when being tested. Since the first edition, it has become more common for models to distinguish between test settings and real-world deployment. This means even well-designed testing protocols may produce a false sense of security.

The 2026 International AI Safety Report defines four distinct layers of defence for AI risk management, each operating independently so that a failure in one does not compromise the others.

Layer 1: Training Interventions (Model Developer Responsibility)

Training interventions are safeguards built into the AI model during development: data curation, reinforcement learning from human feedback, adversarial training. They are now almost universally applied to leading models. But over-optimisation for human approval can introduce new problems, and no training approach eliminates harmful behaviour entirely. This is the layer most enterprises receive from their vendor. It is necessary but not sufficient.

Layer 2: Deployment Interventions (Deployer Responsibility)

Deployment interventions are controls applied when the AI system goes live: input filters, output filters, access restrictions, acceptable use policies, and human oversight protocols for high-stakes decisions. Effective deployment interventions are tailored to specific use cases and risk profiles. Decisions about how models are made available to users can substantially affect risk exposure. This is the layer where enterprises have the most direct control, and the most room for improvement.
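To make the Layer 2 controls more concrete, here is a minimal sketch of how a deployer might chain them around a model call. Everything in it is hypothetical: the role list, keyword lists, use-case names and the `fake_model` stand-in are placeholders, and simple keyword matching stands in for the trained classifiers and policy engines a production filter would more likely use. What the sketch is meant to show is the structure: each check runs on its own, so a prompt that slips past the input filter can still be caught by the output filter or held for human review.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical policy values for illustration only; a real deployment would
# tailor these to its own use cases and risk profile.
ALLOWED_ROLES = {"analyst", "underwriter"}
BLOCKED_INPUT_TERMS = ("bypass authentication", "synthesise toxin")
BLOCKED_OUTPUT_TERMS = ("password:", "api_key=")
HIGH_STAKES_USE_CASES = {"credit_decision", "medical_triage"}


@dataclass
class Outcome:
    status: str  # "blocked", "pending_review" or "released"
    detail: str


def handle_request(role: str, use_case: str, prompt: str,
                   model: Callable[[str], str]) -> Outcome:
    # Access restriction: only approved roles may call the model at all.
    if role not in ALLOWED_ROLES:
        return Outcome("blocked", "role not authorised")
    # Input filter: reject prompts matching known misuse patterns.
    if any(term in prompt.lower() for term in BLOCKED_INPUT_TERMS):
        return Outcome("blocked", "input filter")
    response = model(prompt)
    # Output filter: checked independently of the input filter.
    if any(term in response.lower() for term in BLOCKED_OUTPUT_TERMS):
        return Outcome("blocked", "output filter")
    # Human oversight: responses for high-stakes use cases wait for a reviewer.
    if use_case in HIGH_STAKES_USE_CASES:
        return Outcome("pending_review", response)
    return Outcome("released", response)


def fake_model(prompt: str) -> str:
    # Hypothetical stand-in for a real model call.
    return f"Summary of: {prompt}"


print(handle_request("analyst", "report_summary", "Summarise Q3 results", fake_model))
# Outcome(status='released', detail='Summary of: Summarise Q3 results')
```

Returning an explicit outcome rather than silently dropping requests also gives Layer 3 something to observe: blocked and held requests can be logged and counted as inputs to post-deployment monitoring.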

Layer 3: Post-Deployment Monitoring (Shared Responsibility)

Post-deployment monitoring involves continuous observation of real-world system behaviour: anomaly detection, incident tracking, usage pattern analysis, and feedback loops. This layer partially addresses the evaluation gap by shifting from pre-deployment prediction to real-time observation. Ecosystem-level monitoring (tracking compute usage, model provenance, data provenance, and usage patterns) is an important component. Systematic AI-specific monitoring remains rare in most enterprises.
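As one simple illustration of usage pattern analysis, the sketch below flags days on which a monitored count jumps well above its recent baseline. It assumes, hypothetically, that the organisation already logs a daily count of some AI-related signal, such as output-filter blocks or user-reported incidents. The trailing-window z-score rule, the window size and the threshold are illustrative choices, not a method prescribed by the report.

```python
from statistics import mean, stdev


def flag_anomalies(daily_counts: list[int], window: int = 7,
                   threshold: float = 3.0) -> list[int]:
    """Return indices of days whose count exceeds the trailing-window mean
    by more than `threshold` standard deviations."""
    if window < 2:
        raise ValueError("window must be at least 2 to estimate a spread")
    flagged = []
    for i in range(window, len(daily_counts)):
        history = daily_counts[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            # Perfectly flat baseline: treat any increase as worth a look.
            if daily_counts[i] > mu:
                flagged.append(i)
            continue
        if (daily_counts[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged


# Hypothetical daily counts of output-filter blocks for one AI application.
counts = [4, 5, 3, 6, 4, 5, 4, 5, 21]
print(flag_anomalies(counts))  # [8]
```

Only the final day, where the count jumps from around five to 21, is flagged. In practice a flag like this would feed incident tracking and a human triage process rather than trigger automatic action; the window and threshold are tuning choices that trade missed incidents against false alarms.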

Layer 4: Societal Resilience (Ecosystem-Wide Responsibility)

The outermost layer addresses the reality that some AI-related incidents will occur despite all preventive measures. Societal resilience refers to the capacity of organisations and broader systems to resist, absorb, recover from, and adapt to AI-related shocks. Examples include DNA synthesis screening for AI-enabled biological risks, incident response protocols for AI-assisted cyberattacks, media literacy programmes, and human-in-the-loop frameworks. The report acknowledges that current AI resilience efforts are uneven and largely untested.

Most organisations deploying general-purpose AI are operating with, at best, two of these four layers, and with limited depth within each.

The typical enterprise relies on training safeguards built into the model by their vendor (layer one) and may have acceptable use policies with some access controls (a partial layer two). Systematic post-deployment monitoring designed specifically for AI systems is rare. AI-specific incident response protocols are rarer still. Governance that extends beyond the organisation into supply chain and ecosystem resilience is not yet standard practice.

This reflects where the industry is, not a failure of individual organisations. AI risk management is acknowledged to be at an early stage and standardisation is limited. But the gap between the layered governance the evidence demands and what most organisations actually practise is real, and it is widening as both AI capabilities and regulatory expectations advance.

For organisations operating in the Gulf region, these findings carry additional weight.

Regulatory frameworks across the UAE, Saudi Arabia, Bahrain, and Qatar are advancing rapidly [4]. The UAE AI Office, SDAIA in Saudi Arabia, and emerging sector-specific guidelines in financial services and healthcare will increasingly expect organisations to demonstrate structured, multi-layered risk management with operational evidence.

At the same time, the region's AI adoption ambitions are among the most aggressive globally. National AI strategies [4] are driving deployment across government services, financial services, healthcare, and energy. The speed of adoption makes the governance gap more acute, not less.

The report's findings on open-weight models are particularly relevant here. As GCC organisations evaluate open-weight alternatives for data sovereignty and cost reasons (a rational strategic choice), the reduced built-in safeguards mean the deploying organisation must compensate with stronger layers two, three, and four. The governance burden shifts squarely to the deploying organisation.

1. Accept the evidence dilemma and build governance that accommodates it. Waiting for perfect evidence is itself a risk. Governance frameworks need built-in mechanisms for revision as new evidence emerges. Static policies drafted once and filed away provide a false sense of security.

2. No single safeguard is sufficient. Build layered defence. Defence-in-depth is the standard the international AI safety community now considers essential. Effective governance layers model-level controls, system-level monitoring, organisational risk processes, and ecosystem-level resilience measures.

3. Build internal evaluation capability. The evaluation gap and information asymmetries mean enterprises relying solely on vendor-provided safety assurances are making decisions with incomplete information. Domain-specific testing aligned to actual use cases, industry context, and risk profile is essential.

4. Open-weight models require more governance, not less. The flexibility and cost advantages come with reduced built-in safeguards. Organisations choosing this path need to invest in stronger internal controls to compensate.

5. Extend governance beyond the organisation. Third-party AI risks, supply chain dependencies, and ecosystem-level vulnerabilities require governance frameworks that look outward as well as inward.

The 2026 International AI Safety Report establishes, with considerable international scientific consensus, that the risk management challenge for AI is structural. The evidence dilemma, the evaluation gap, the information asymmetries, the market dynamics: these are not problems that will be solved by the next model release or the next vendor safety update.

The answer is architectural. No model safety training will be perfectly robust. No pre-deployment evaluation will catch everything. No usage policy will prevent all misuse. No monitoring system will flag every anomaly. But layered together, with each operating independently and designed to catch what the others miss, these measures create a governance architecture that is genuinely resilient.

The organisations that recognise this now, and start building the layers they are missing, will be far better positioned than those who wait for regulation to force the issue.

[1] International AI Safety Report 2026. Published February 3, 2026. Available at: https://internationalaisafetyreport.org

[2] International AI Safety Report 2025. Published January 2025.

[3] OpenAI, “Preparedness Framework, Version 2” (2025).

Anthropic, “Responsible Scaling Policy, Version 2.2” (2025).

Google DeepMind, “Frontier Safety Framework, Version 3.0” (2025).

Meta, “Frontier AI Framework, Version 1.1” (2024).

Amazon, “Amazon’s Frontier Model Safety Framework” (2025).

Microsoft, “Microsoft Frontier Governance Framework” (2025).

Cohere, “The Cohere Secure AI Frontier Model Framework” (2025).

xAI, “xAI Risk Management Framework” (2025).

Magic AI, “AGI Readiness Policy” (2024).

NAVER Cloud, “NAVER’s AI Safety Framework (ASF)” (2024).

G42, “G42’s Frontier AI Safety Framework” (2025).

B. Simkin, N. Pope, L. Derczynski, C. Parisien, “Frontier AI Risk Assessment” (NVIDIA, 2025).

[4] MindXO governance reference library.

MindXO is a UAE-based consultancy specialising in AI governance and risk management for enterprises and government entities. MindXO helps organisations build layered AI governance, from diagnostic assessments and governance frameworks to risk tiering, post-deployment monitoring, and organisational resilience.

MindXO's frameworks are aligned with ISO 42001, NIST AI RMF, and GCC regulatory requirements. MindXO maintains full vendor neutrality. To discuss how the 2026 AI Safety Report findings apply to your organisation, get in touch.

For more analysis of AI governance frameworks and regulatory developments, visit the MindXO Articles hub.
